Jan Chong, Ph.D., wrote her dissertation on "Software Development Practices and Knowledge Sharing: A Comparison of XP and Waterfall Team Behaviors". I came across a recorded presentation of her discussing her dissertation at The Research Channel here:

http://www.researchchannel.org/prog/displayevent.aspx?rID=16075&fID=345

Here is the description of the recorded presentation:

My dissertation research explores knowledge sharing behaviors among two teams of software developers, looking at how knowledge sharing may be affected by a team's choice of software development methodology. I conducted ethnographic observations with two product teams, one which adopted eXtreme Programming (XP) and one which used waterfall methods. Through analysis of 808 knowledge sharing events witnessed over 9 months in the field, I demonstrate differences across the two teams in what knowledge is formally captured (say, in tools or written documents) and what knowledge is communicated explicitly between team members. I then discuss how the practices employed by the programmers and the configuration of their work setting influenced these knowledge sharing behaviors. I then suggest implications of these differences, for both software development practice and for systems that might support software development work.

Jan's full biography at the time of her dissertation:

Jan is a doctoral candidate in the Department of Management Science and Engineering at Stanford University. She is affiliated with the Center for Work, Technology and Organization. Her research interests include collaborative software engineering, agile methods, knowledge management and computer supported collaborative work. Jan holds a B.S. and an M.S. in Computer Science from Stanford University.

Her complete dissertation is available here: http://www.amazon.com/Knowledge-Sharing-Software-Development-Comparing/dp/3639100840

Highlighted Slides from Recorded Presentation

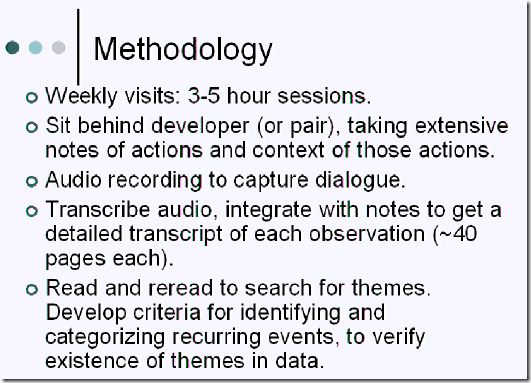

It's nice to see a formal study that compares these different styles of work. Jan spent time observing both teams for about 9 months. Here is a bit more about her methodology.

Study Methodology

Team Communication Styles

First, on the XP team, communication is more open, facilitated by information radiators and an open workspace:

The waterfall team has team members who work alone, in their own cubicles, and who communicate primarily through an online chat program. Thus, they sometimes "broadcast" information to others inside of the chatroom:

Observed Events and Data Coding

Through analyzing her recordings and notes, Jan classified all the different knowledge-transfer events into the following categories:

Knowledge Seeking Behaviors

This slide compares the actions taken by teammates in explicitly asking for knowledge transfer from others:

Knowledge Offering and Relevance

Below, Jan has categorized the types of communication that are offered and their respective relevance.

Recorded Knowledge

It's very interesting to note that, percentage-wise, the waterfall team recorded far more knowledge for the "long term" than the XP team did. What is not clear, however, is whether the XP team simply recorded more personal items in addition to the same kinds of long-term items, or whether it left out certain long-term items that the waterfall team properly recorded.

Summary Slides

The following three slides are her concluding slides:

Review and Analysis

I'm about to join a great team for an agile project that will be built using Scrum and XP practices. This opportunity is very exciting for me. That excitement is born out of my own "in the trenches" experience and observations on both Agile/Scrum/XP and waterfall. If you're reading this and are unfamiliar with the differences between agile and waterfall, I recommend you take both of them for a "test drive" by reading or listening to my article entitled "From Waterfall to Agile Development in 10 Minutes: An Introduction for Everyone". Also read "Don't Get Drowned by Waterfall: Break out the Delusion" and "D = V * T : The formula in software DeVelopmenT to get features DONE".

If you cannot tell by now, my preference is for agile development and not waterfall. Waterfall, at least in its pure form, as you can read in the first article, is a mistake and always has been a mistake for software systems development. While Jan stops short of claiming a preference for one style of development or the other in her analysis, it's important to note that this is because that was not her intention. She is working on improving software methodology as a whole, and seeks to synthesize best practices from actual empirically observed behavior and data.

How Useful is the Long-Term Design Documentation by the Waterfall Team?

Jan observed that the waterfall team had members who worked alone and went "back to the code" when they needed to understand something or had to work on a module they had not worked on before. What I'm not clear on is whether she meant "automated test case code" or "implementation code". She also noted that the XP team members consulted one another more often about how things work before looking at code, and that the waterfall team created a higher percentage of recorded knowledge about the long-term aspects of the project. Judging by her comments in the video, I am assuming this meant written documentation.

Question: How often did the waterfall developers actually refer back to those long-term design documents, and which of those documents did they originally anticipate being useful to other developers?

The reason I would ask this is that she already noted that the waterfall team members spent a lot of time reading code, and also reading CVS check-in messages when others checked in changes, but she didn't address whether they (or anyone) read the long-term design documents for any useful purpose.

Highest Communication Bandwidth: Face-to-Face Collaboration at a Whiteboard

It has been my personal experience as a developer and architect that when I work closely with other members of a team in a collaborative, open workspace, I do not need to refer to implementation code or documentation as often. Instead, we rely more on a constantly evolving shared language, basic metaphors, automated test cases, and whiteboards to communicate the "gist" of how something works, and then we refer to detailed implementation code as soon as we need the details or need to pinpoint a trouble area. Scott Ambler has written extensively on the subject of agile documentation and communication. Here is a chart he produced based on a survey about the most effective forms of communication:

Source: http://www.agilemodeling.com/essays/agileDocumentation.htm

As you can see, paper documentation is by far the least effective form of communication, and face-to-face conversation at a whiteboard is the most effective. Kevin Skibbe, a friend I used to work with, is the most effective whiteboard communicator I know. What he explained to me was that when two or more people try to communicate via the whiteboard, they must focus on developing a shared mental model. With face-to-face communication alone, without a whiteboard, each person is still maintaining an independent, non-shared mental model of what the other person is thinking.

Building Long-Term Executable Knowledge / Documentation via Automated Tests

One measurement I didn't see explicitly noted is the notion of "Executable Knowledge", more frequently called "Executable Specifications". Scott Ambler writes about this here: http://www.agilemodeling.com/essays/executableSpecifications.htm, and Mary Poppendieck writes about it in her books and presentations: http://www.poppendieck.com/

I suggest that teams, whether waterfall or agile, incorporate this practice into their development in order to produce fewer defects, increase explicit knowledge in the code-base, and reduce the need to continually read implementation code.

We should first think about software systems in light of their intrinsic nature and in terms of the change process required to modify or enhance them with maximum ability to produce the desired result. That result might be faster ROI in the market, increased product quality, or what have you.

What we never want, however, is broken functionality after we release. This is why we build regression test suites that can be executed at will to confirm as much as computationally possible of the encoded knowledge about the business domain and user requirements.

This is how Test-Driven-Development works. Here is how Ambler depicts this view:

That is a nice technical view of things, but what about the view from the business side? Ambler presents a written form of the requirement that would be provided to developers from a business analyst in the article linked above.
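To make the idea concrete, here is a minimal sketch of what an executable specification might look like in Python. The business rule (orders of $100.00 or more ship free, all others pay a flat fee), the function name `shipping_fee`, and the fee amounts are all hypothetical and invented for illustration; they do not come from Ambler's article or from Jan's study.

```python
import unittest
from decimal import Decimal

# Hypothetical business rule, invented for this illustration:
# orders of $100.00 or more ship free; all other orders pay a flat fee.
FREE_SHIPPING_THRESHOLD = Decimal("100.00")
STANDARD_SHIPPING_FEE = Decimal("7.50")


def shipping_fee(order_total: Decimal) -> Decimal:
    """Return the shipping fee for an order under the hypothetical rule."""
    if order_total >= FREE_SHIPPING_THRESHOLD:
        return Decimal("0.00")
    return STANDARD_SHIPPING_FEE


class ShippingFeeSpecification(unittest.TestCase):
    """Each test name reads like one line of the written requirement."""

    def test_orders_at_the_threshold_ship_free(self):
        self.assertEqual(shipping_fee(Decimal("100.00")), Decimal("0.00"))

    def test_orders_above_the_threshold_ship_free(self):
        self.assertEqual(shipping_fee(Decimal("250.00")), Decimal("0.00"))

    def test_orders_below_the_threshold_pay_the_standard_fee(self):
        self.assertEqual(shipping_fee(Decimal("99.99")), STANDARD_SHIPPING_FEE)
```

Saved in a file, the specification can be run at will with `python -m unittest`. In a test-driven workflow the test class would be written first and would fail until `shipping_fee` is implemented; afterwards it stays in the regression suite, so the requirement remains recorded in a form that is checked on every change.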

Waterfall is Always Wrong Compared to Agile for Building a New System

I'm going to assume here a definition of waterfall that is primarily the standard sequential approach, where requirements come first, followed by detailed design, development, a test/drop-scope/rework cycle (repeated as necessary until completion), and finally deployment and maintenance.

If a project is about developing a completely new product from the ground up, then adopting the sequential model for a system of any degree of complexity beyond perhaps an estimated month of duration is simply asking for pain. I'm not going to explain why in this article, but I refer you to the three articles I mentioned above for a complete explanation. But, to summarize:

When you begin a moderate to large custom development project, there are many requirements you simply cannot know until you have started to develop a subset of the entire envisioned project and that subset makes its way into the hands of the critical stakeholders like the project owner and the target users.

For more evidence, refer to Craig Larman's research and explanation about the history of waterfall in this article: http://www.highproductivity.org/r6047.pdf

Waterfall is Still Wrong for Enhancing an Existing System

However, suppose a software system is already released and "in production". Should a team then use waterfall techniques to build additional features?

I believe the answer is no. The reason is reflected above in the TDD diagram. You want to reduce the impact of changes and build up a suite of regression tests as you develop the solution. And, you want to seek feedback as early as possible to reduce time spent working on incorrect or undesired functionality.

Waterfall is Especially Wrong for Rebuilding an Existing System

In my experience, and I've been through it a couple of times now, waterfall is notoriously wrong when you are asked to rebuild an existing system in a new technology. The reason is that often the project sponsor will state little more than the fact that they want the existing system functionality essentially duplicated. Unfortunately, much time will be wasted by the team if it tries to clone piece-by-piece the existing product.

A much better approach is to use the existing system as a very high fidelity model. The team should then use iterative and incremental agile practices to deliver features that are focused on making and keeping the project potentially shippable as soon as possible. This ensures a complete vertical "slice" of functionality through all the application's layers gets built as soon as possible. To learn more about these practices, see the screencast series Autumn of Agile at http://www.autumnofagile.net. The first episode gives a comprehensive and very compelling explanation of why agile presents better business value than waterfall. My article "Don't Get Drowned by Waterfall: Break out the Delusion" references a few key slides from that series.

The Embarrassment of Waterfall's Persistence

Waterfall continues to exist in the technology world because it sounds easy to understand on the surface, even though everyone also realizes the intrinsic contradiction in software development: requirements constantly change. I don't blame product owners or business people for waterfall's persistence. I blame us, the developers and project managers. It is our fault for not being more responsive to changing environmental conditions and changing requirements.

But, balancing the ability to change on demand with the requirement to remain stable at all other times is what agility is all about. That is why agile focuses on rigorous empirical testing, visual monitoring, and continuous feedback. That is why automated tests as executable specifications speed up the ability to change while simultaneously increasing quality and confidence.

Stay tuned for my next article, which will update the "age old" building construction metaphor that says building software is like building a building. In many ways it is, but I will make key distinctions that enable agility!

Until then, stay agile, not fragile.
